Summary: Some blind individuals possess a “superpower” known as echolocation, using the echoes of their own mouth clicks to navigate the world. A new study published in eNeuro maps how the brain processes these sounds.

By comparing expert echolocators to sighted individuals in total darkness, the team found that the brain doesn’t just “hear” an object; it accumulates information across a sequence of clicks. Each additional click acts like a brushstroke, building up a mental representation of the surroundings in real time.

Key Facts

- Expert Precision: Four blind expert echolocators significantly outperformed 21 sighted individuals at identifying object locations in a pitch-black room.

- The “Build-Up” Effect: Neural activity in the brain strengthens with every successive click. The more clicks an expert makes, the more accurate their “vision-by-sound” becomes.

- Information Summation: Lead researcher Haydee Garcia-Lazaro reports that, in some experts, the brain appears to use “summation” to combine repeated sound information into a stable spatial map.

- Behavioral Correlation: For the expert echolocators, object localization became more accurate as the number of clicks in a sequence increased, consistent with echolocation being an active, iterative process.

- Future Training: As next steps, the researchers want to determine what makes blind people adept at echolocation and to train people with and without sight to engage their echolocation ability.

Source: SfN

Some blind people use the returning echoes of their own mouth clicks to perceive their surroundings, a skill known as echolocation.

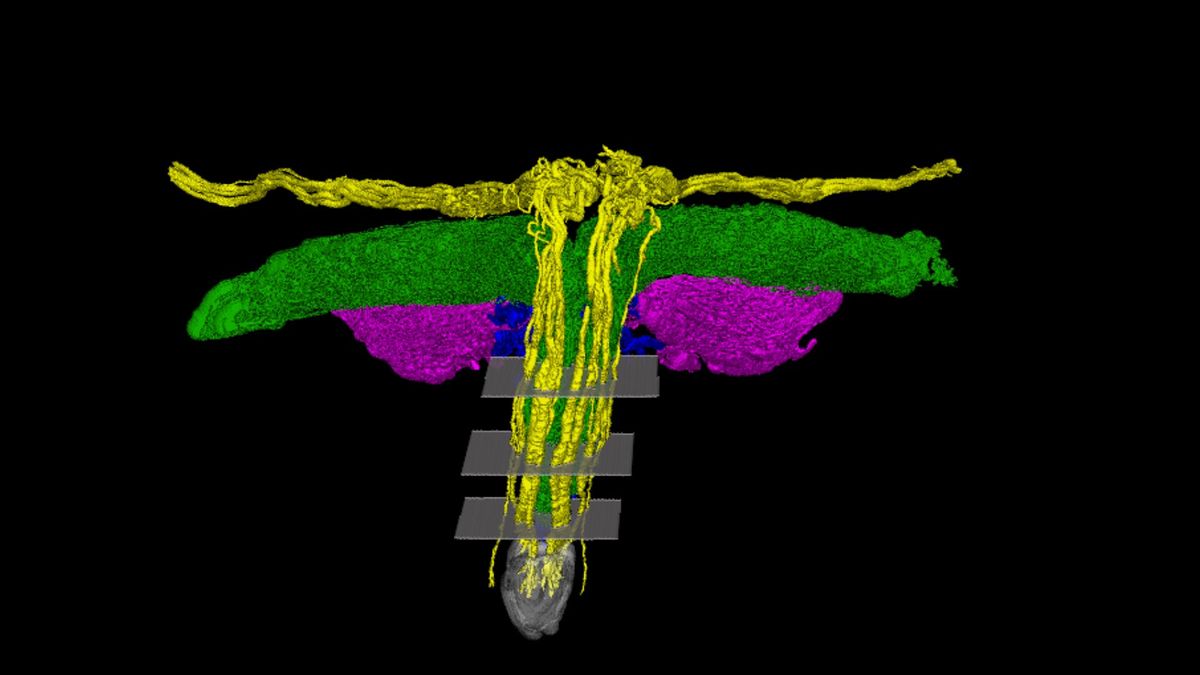

In new research published in eNeuro, Haydee Garcia-Lazaro and Santani Teng, of the Smith–Kettlewell Eye Research Institute, explored how the human brain creates representations of external surroundings using echolocation.

The researchers first found that four blind individuals experienced in echolocation could identify object location better than 21 sighted people in a dark room. In these expert echolocators, accuracy improved as the number of clicks in a sequence increased.

The researchers also linked neural activity in the brain to the ability of blind individuals to determine object location. This activity, alongside behavioral measures, strengthened across click sequences, leading to more accurate object location.

Says Garcia-Lazaro, “Basically, we found that, in some experts, there appears to be a summation, or accumulation, of information in the brain that builds up across clicks about object location.”

According to the researchers, this work shows how the brain uses repeated sound information to create representations of the environment in the absence of vision.

Garcia-Lazaro expresses excitement about the next steps stemming from this work, including determining what makes blind people adept at echolocation and training people with and without sight to engage their echolocation ability.

Key Questions Answered:

Q: Do echolocators actually “see” the objects in their minds?

A: In a way, yes. Although echolocators rely on sound, earlier research suggests the brain can recruit the visual cortex to process this spatial information. This study shows that, in experts, the brain builds up a spatial representation click by click. The task tested here was locating objects, not judging their size or texture.

Q: Why don’t sighted people have this ability?

A: We actually do have a “latent” version of it! Sighted people in the study were able to do it, just much less accurately. Expert echolocators have “neuroplastic” brains that have been tuned through years of practice to prioritize these subtle echoes, whereas sighted brains usually ignore them in favor of light.

Q: Can anyone learn to echolocate?

A: The researchers believe so. Because they identified a specific neural “summation” process, it opens the door for training programs. Just as you can learn to play an instrument, you can likely train your brain to “listen” for the build-up of spatial data from your own clicks.

Editorial Notes:

- This article was edited by a Neuroscience News editor.

- Journal paper reviewed in full.

- Additional context added by our staff.

About this visual and auditory neuroscience research news

Author: SfN Media

Contact: SfN Media – SfN

Image: The image is credited to Neuroscience News

Original Research: Closed access.

“Neural and Behavioral Correlates of Evidence Accumulation in Human Click-Based Echolocation” by Haydée G García-Lázaro and Santani Teng. eNeuro

DOI:10.1523/ENEURO.0342-25.2026

Abstract

Neural and Behavioral Correlates of Evidence Accumulation in Human Click-Based Echolocation

Echolocation enables blind individuals to perceive and navigate their environment by emitting clicks and interpreting their returning echoes. While expert blind echolocators demonstrate remarkable spatial accuracy, the behavioral and neural mechanisms by which spatial echoacoustic cues are combined across repeated samples remain less explored.

Here, we investigated the temporal dynamics of spatial information processing in human click-based echolocation using EEG. Blind expert echolocators (n=4, all males) and novice sighted participants (n=21, 12 males) localized virtual spatialized echoes derived from realistic synthesized mouth clicks, presented in trains of 2–11 clicks. Behavioral results showed that blind expert echolocators significantly outperformed sighted controls in spatial localization.

For these experts, localization thresholds decreased as the number of clicks increased, a pattern consistent with cumulative integration of spatial information across repeated samples. EEG decoding analyses revealed reliable neural discrimination of echo laterality from the first click that correlated with overall spatial localization performance.

Across successive clicks, neural responses evolved systematically, reflecting sequence-position–dependent changes in neural dynamics. EEG trial-level modeling further allowed us to distinguish accumulation-consistent decision readout policies from alternative repetition-based accounts, revealing individual differences in decision policies among expert echolocators.

These findings provide, to our knowledge, the first fine-grained account of the temporal neural dynamics supporting human click-based echolocation, directly linked to behavioral performance across multiple samples.

They reveal how, in expert echolocators who successfully performed the task, successive echoes are progressively integrated into coherent spatial representations, demonstrating adaptive sensory processing in the absence of vision.
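
To give a feel for why accuracy can improve with more clicks, here is a toy simulation of cumulative integration. It is only an illustrative sketch, not the model used in the paper: it assumes each click yields an independent, noisy estimate of the object's direction and that the listener simply averages those estimates. The function name, the 10-degree noise level, and the trial count are all hypothetical choices made for this example.

```python
import random
import statistics


def simulate_localization_error(n_clicks, noise_sd=10.0, n_trials=2000, seed=0):
    """Mean absolute error (degrees) after averaging n_clicks noisy estimates.

    Assumption for illustration only: each click gives an independent
    Gaussian-noise reading of the true direction.
    """
    rng = random.Random(seed)
    true_azimuth = 0.0  # hypothetical object direction, in degrees
    errors = []
    for _ in range(n_trials):
        # One noisy directional reading per click in the train.
        samples = [rng.gauss(true_azimuth, noise_sd) for _ in range(n_clicks)]
        # "Accumulation" is modeled here as a plain average across clicks.
        estimate = statistics.fmean(samples)
        errors.append(abs(estimate - true_azimuth))
    return statistics.fmean(errors)


if __name__ == "__main__":
    # Click-train lengths within the 2 to 11 range used in the study.
    for n in (2, 5, 11):
        print(n, "clicks:", round(simulate_localization_error(n), 2), "deg")
```

Under these assumptions the average error shrinks roughly in proportion to one over the square root of the number of clicks, which is the general pattern one would expect if information is pooled across samples. The study's own trial-level modeling is more detailed and found that experts differed in how they used the accumulated evidence.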
