ZDNET's key takeaways

- Using local AI is responsible and private.

- GPT4All is a cross-platform, local AI that is free and open source.

- GPT4All works with multiple LLMs and local documents.

As far as AI is concerned, I have a few rules for myself: It's not for creative purposes, it's not to be used as a writing crutch, and whenever possible, go local.

That's a fairly straightforward list, which, to date, hasn't been all too challenging to adhere to. For the longest time, I've opted to go with Ollama because it's local, runs on all desktop platforms, and is open source.

Recently, however, I came across another open-source tool that has some really cool features. That tool is GPT4All.

GPT4All is released under the MIT License and can be installed and used on Linux, MacOS, and Windows for free. It includes all the features you'd expect: support for thousands of models (and as many LLMs as you care to add), follow-up questions, full customization, and chat with your local documents (LocalDocs). It also requires no API calls or GPU.

I decided to give GPT4All a try and see how it stacked up against Ollama. Much to my surprise, it won me over pretty quickly.

Let's get GPT4All installed and see what's what.

How to install GPT4All

If you're using MacOS or Windows, GPT4All is very simple to install. Just download the associated installer file from the GPT4All site, double-click the downloaded file, and walk through the installation wizard.

If you're using Linux, things get a bit tricky. For starters, GPT4All offers an installer only for Ubuntu-based distributions. I first attempted to install GPT4All on Pop!_OS, which is Ubuntu-based. When I did that, I received an error saying it couldn't find the necessary Qt libraries.

OK, then. KDE Plasma is built on Qt, so it's KDE Plasma time.

I fired up a Fedora VM with KDE Plasma and ran the installer. Nope. This time, it was a missing X11 library that caused it to fail.

Fine. I gave it one more try, this time with KDE Neon. That did the trick, and in less than a minute, I had GPT4All running on KDE Neon. One warning: Do not attempt to install GPT4All with admin (sudo) privileges, as it will fail every time. Instead, download the installer file, give it executable permissions with chmod u+x gpt4all-installer-linux.run, and then launch the installer with ./gpt4all-installer-linux.run.
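If you'd rather not type those Linux steps by hand every time, they can be wrapped in a small Bash function. This is a minimal sketch, not an official GPT4All script: the install_gpt4all function name is hypothetical, and it assumes the installer was saved to the current directory under the gpt4all-installer-linux.run file name used above. It adds a guard that refuses to run as root, in line with the sudo warning, and a check that the file is actually there.

```shell
# Hypothetical helper wrapping the two Linux install commands described above.
# Assumes gpt4all-installer-linux.run sits in the current directory.
install_gpt4all() {
  local installer="gpt4all-installer-linux.run"

  # The installer fails with admin (sudo) privileges, so refuse to run as root.
  if [ "$(id -u)" -eq 0 ]; then
    echo "Run the GPT4All installer as a regular user, not with sudo." >&2
    return 1
  fi

  # Fail early if the installer hasn't been downloaded to this directory.
  if [ ! -f "$installer" ]; then
    echo "Could not find $installer in $(pwd)." >&2
    return 1
  fi

  # Give the installer executable permissions, then launch it.
  chmod u+x "$installer"
  "./$installer"
}
```

To use it, paste the function into your shell (or source it from a file), cd into the folder where you saved the installer, and run install_gpt4all as your regular user.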

Once GPT4All is installed, it doesn't matter which platform you use, because the GUI and feature set are the same across the board.

How to use GPT4All

Loading a model

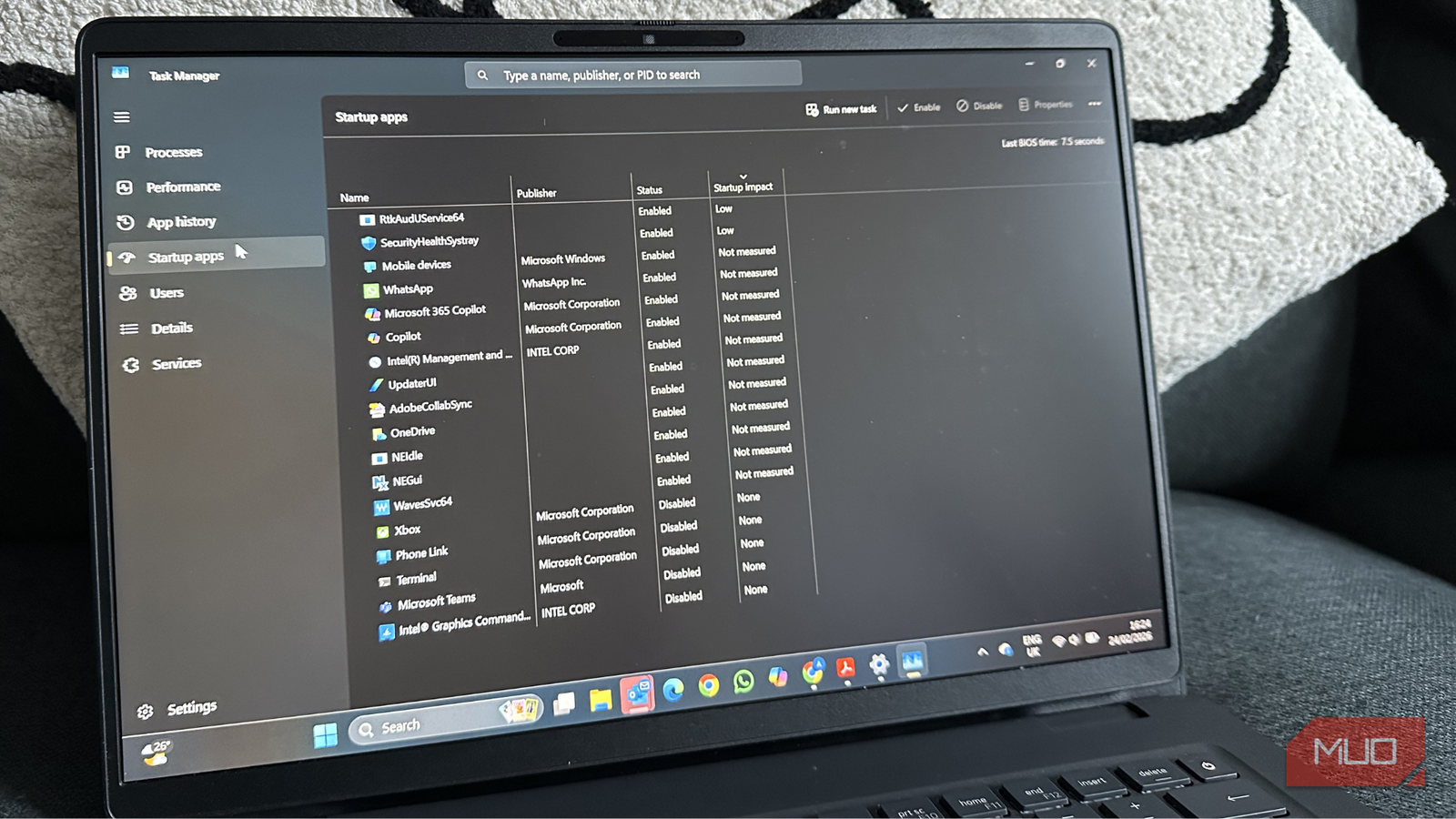

If you've used any AI, especially local AI, you'll feel right at home with GPT4All. The GUI is well laid out, so you'll be able to take advantage of all its features right out of the gate.

However, you do have to add a model before you start. To do that, click Models in the left sidebar and then click Add Model.

GPT4All offers a very clean and easy-to-use UI.

In the resulting window, locate the model you want to add and click Download.

You can add as many models as you need.

After the model has been downloaded, click Chat in the left sidebar, and then click Load to load the LLM you just downloaded. You can then start sending queries, but be sure to verify the validity of the results.

I downloaded the GPT4All Falcon model because I'd never tried it. When I asked my usual first question ("What is Linux?"), the response surprised me.

I typically receive a verbose answer about Linux, packed with details (some of which are incorrect). This time, however, I received a two-sentence reply. Succinct.

I could also follow up with something like, "Is Linux good for desktop usage?" The answer:

"Yes, Linux is a good choice for desktop usage. It has a wide range of applications, and it can be customized to meet the specific needs of individual users."

Take that, haters!

Adding your own docs

One feature you might enjoy is the ability to load your own documents and use them in your queries. For example, you might have written a tome of articles, theses, monographs, etc., and want to summarize them, combine them, and use them for research. Here's how you do that.

First, collect all of the documents you want to add into a dedicated folder. Once you've done that, click Local Docs in the left sidebar. In the resulting window, click Add Doc Collection.

Getting ready to load my own documents into GPT4All.

I did a very simple test with this feature. I added my teaching resume to a new collection and asked the GPT4All Falcon model, "What are Jack Wallen's teaching credentials?"

To my surprise, it failed, mentioning only my Linux-related experience, which appears nowhere on that particular resume. So I removed the Falcon model and switched to a Llama model instead.

Yep. That worked, and worked very well. Lesson learned: Always choose the model that fits the task at hand.

My new local AI?

As much as I hate to step away from Ollama, it looks like I might have a new local AI app, at least for MacOS. Until the developers make it possible to install the app on non-Qt-based desktop environments, I'll have to stick with Alpaca on Linux.

Come on, devs… do us Linux people a solid.