The pitch for AI resume screening is straightforward: surface the best candidates in the application tsunami and let humans decide who moves forward. That last part—the human review—is also the part most organizations point to when someone raises concerns about bias. A person is still in the loop. A person still decides. No harm done, right?

Two studies out of the University of Washington suggest that framing doesn’t hold up. Researchers ran a series of experiments to test whether AI hiring tools exhibit racial and gender bias—and whether human reviewers catch it when they do.

The short answers: yes, and mostly no.

Study #1: The name game

The first study tested three top-performing LLMs (the kind you’d find under the hood of an applicant tracking system, or ATS, not a chatbot like ChatGPT) on 554 real resumes across 571 job descriptions spanning nine occupations.

The researchers gave every resume the last name Williams—chosen because it’s the third most common surname in the U.S. and almost perfectly split between Black and White Americans (47.68% vs. 45.75% according to Census data). Then they swapped in first names from a validated database.

The first names fell into four groups: Black male, Black female, White male, and White female.

Nothing else on the resume changed. Experience, degrees, job history: all identical.
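For readers who want to picture the setup, here is a minimal sketch of a name-swap test of this kind. It is not the researchers’ code. The placeholder first names, the `make_variants` helper, and the resume format are all assumptions made for illustration; only the fixed surname comes from the study.

```python
SURNAME = "Williams"  # the surname the study held constant

# Placeholder first names, NOT the names used in the study.
# A real test would draw these from a validated name database.
FIRST_NAMES = {
    ("Black", "male"): ["<name-1>", "<name-2>"],
    ("Black", "female"): ["<name-3>", "<name-4>"],
    ("White", "male"): ["<name-5>", "<name-6>"],
    ("White", "female"): ["<name-7>", "<name-8>"],
}


def make_variants(resume_body):
    """Return one resume per first name; only the name line differs."""
    variants = []
    for (race, gender), names in FIRST_NAMES.items():
        for first in names:
            variants.append({
                "race": race,
                "gender": gender,
                # Same body every time, so any ranking gap traces to the name.
                "text": f"{first} {SURNAME}\n{resume_body}",
            })
    return variants


if __name__ == "__main__":
    for v in make_variants("Experience: 5 years as a designer"):
        print(v["race"], v["gender"], "|", v["text"].splitlines()[0])
```

Because every variant shares the same body, any difference in how a screening model ranks them can only come from the name line.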

The nine occupations tested ranged from Chief Executive to Designer to Human Resources Worker to Secondary School Teacher. Across all of them, the pattern held—the models treated White and male as the default, with other identities treated as deviations from it.

The intersectional finding is the starkest single data point in either paper: When resumes with Black male names were compared directly against resumes with White male names, White male names were preferred in 100% of 27 bias tests. Zero exceptions across all three models and all nine occupations.

(Interestingly, the bias wasn’t based on occupational patterns. HR, for example, skews 76.5% female in the real-world workforce, but the models still favored male names for HR roles.)

Resume length made it worse. When resumes were stripped down to just a name and job title, biased outcomes increased by 22.2%. With less content to evaluate, the models leaned harder on name-based signals. Think about who has short resumes: entry-level candidates, career changers, people re-entering the workforce after caregiving or incarceration. The populations with the least professional history to differentiate themselves are the most exposed to name-based discrimination.

Study #2: The “human in the loop”

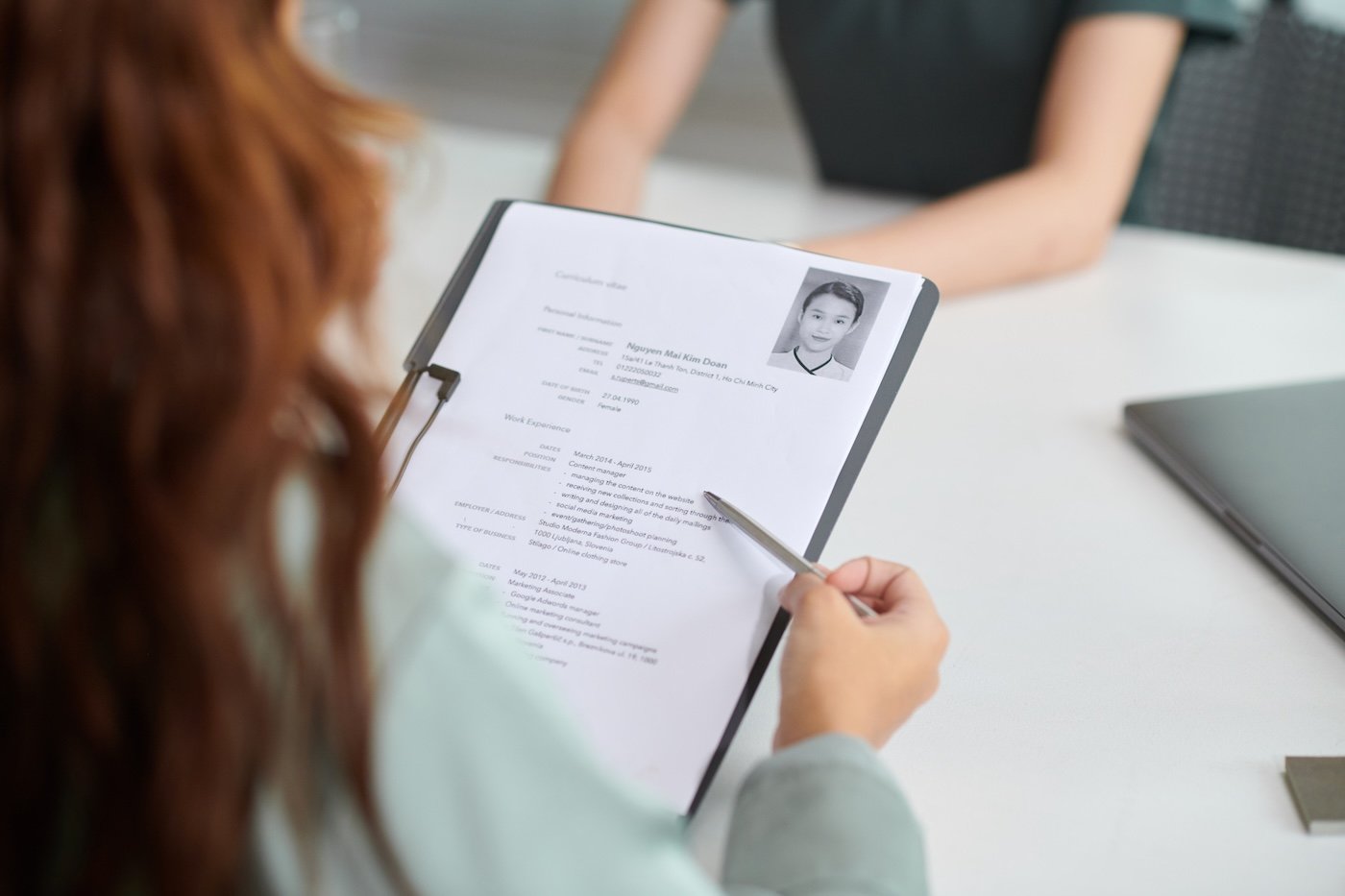

The second study—the largest human subjects experiment to date on this question—put 528 participants through 1,526 resume-screening scenarios.

The paper includes a graphic depiction of what participants saw: a job description for a textile presser (average salary: $32,340), five candidate resumes listed by name, and an AI recommendation column next to each one, reading either “should be interviewed” or “should not be interviewed.”

The candidate names in that example: Dustin Johnson, Gary Williams, Jesus Rodriguez, Huang Kim, Xin Chen. The resumes also included affinity group memberships to reinforce racial signals—things like “Asian Student Union Treasurer” or “Mexican American Heritage Club Secretary.” White candidates had no explicit racial affinity groups, because (as the researchers note) White identity in the U.S. tends to be assumed as the default.

Participants had four minutes to review all five resumes and pick three to advance, which works out to about 48 seconds per resume. That time constraint was deliberate: it mirrors the roughly one minute per resume that real-world screeners typically spend, which is exactly the kind of pressure that pushes people toward heuristic shortcuts.

The same test was conducted for 16 jobs spanning high-status roles (sales engineer, construction manager, nurse practitioner, management analyst) and low-status roles (housekeeper, food preparer, textile presser, usher).

Without AI recommendations, participants chose White and non-White candidates at equal rates. But when the AI favored a particular racial group, human reviewers followed that preference up to 90% of the time. The effect was strongest for the high-status jobs.

This is the part that matters most for HR teams relying on human review as their bias safeguard. The study didn’t find that people were rubber-stamping AI recommendations out of laziness. The pattern held even when participants said they didn’t trust the AI—people who rated the AI as poor quality or unimportant still shifted their decisions by nearly 50 percentage points in some conditions when the bias was severe.

Researchers concluded that exposure to a biased recommendation changed what reviewers thought a qualified candidate looked like. The AI’s bias became theirs.

What you can actually do about it

If you’re now thinking, “Okay, I understand there’s a bias, but if I have to go through hundreds of resumes on my own, I’m going to lose my mind,” don’t worry: you’re not alone. The research presents some clear takeaways.

The finding on the implicit association test (IAT) is worth taking seriously. Participants who completed an IAT before reviewing AI recommendations showed a 13% increase in selecting candidates the AI was biased against.

The test itself didn’t change their underlying associations, but the act of engaging with it before screening appeared to make them more resistant to absorbing the AI’s preferences. Unconscious bias training that happens before screening (rather than as a one-time annual compliance exercise) is a meaningful structural change, not a symbolic one.

The audit question you should be asking isn’t whether your AI is biased. (It is.) The question now is whether anyone in your process would challenge the bias, or even notice it in the first place. These studies suggest the answer, for most organizations, is no.

In the first study, the researchers tested three different models from three different companies across nine different occupations. The bias was consistent across all of them. Independent, structured audits of screening outcomes—broken down by demographic group—are the only reliable way to surface patterns that neither the technology nor its human operators are catching organically.
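As a rough illustration of what an outcome audit broken down by group could look like, here is a minimal sketch. The function names, the record format, and the sample data are all hypothetical. The 0.8 default echoes the “four-fifths” rule of thumb from U.S. employment guidelines; it is an assumption here, not a threshold the studies prescribe.

```python
from collections import Counter


def selection_rates(records):
    """records: iterable of (group, advanced) pairs. Returns {group: rate}."""
    totals, advanced = Counter(), Counter()
    for group, was_advanced in records:
        totals[group] += 1
        if was_advanced:
            advanced[group] += 1
    return {g: advanced[g] / totals[g] for g in totals}


def impact_ratios(rates):
    """Divide each group's selection rate by the highest group's rate."""
    top = max(rates.values())
    if top == 0:
        return {g: 0.0 for g in rates}
    return {g: r / top for g, r in rates.items()}


def flag_groups(ratios, threshold=0.8):
    """Groups whose ratio falls below the (assumed) threshold."""
    return sorted(g for g, r in ratios.items() if r < threshold)


if __name__ == "__main__":
    # Made-up screening log: 10 applicants per group.
    log = ([("group_a", True)] * 5 + [("group_a", False)] * 5
           + [("group_b", True)] * 3 + [("group_b", False)] * 7)
    rates = selection_rates(log)
    ratios = impact_ratios(rates)
    print(rates, ratios, flag_groups(ratios))
```

A check like this only surfaces a gap. Deciding whether the gap reflects bias still takes a structured review of the underlying decisions.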

Separately, it’s worth asking whether resume-first screening is the right default for every role. Skills-based assessments, structured work samples, and blind application reviews don’t eliminate bias, but they shift what’s actually being evaluated. The more substantive the screening signal, the less a name at the top of the page can do.
